Cognitive biases mean AstraZeneca is on the nose rather than in as many arms as it might have been.

Vaccine hesitancy is still hovering at about 20% across Australia. That is down from a high of about 35%, but it remains high given the rising risks from COVID-19, and from the Delta strain in particular.

This is not entirely surprising. Vaccine hesitancy makes sense because it’s human nature. More specifically, it makes sense that we are scared of the very thing that could save us once we consider the cognitive biases we filter the world through, including how we decide what is more likely to kill us.

But while it’s hard to change the way we think, behaviour change approaches may offer ways to prompt us to change what we do.

People simply don’t think straight. About vaccines, or in fact most things.

Psychology and social science have shown over and over again that people are not all that rational, starting with seminal research in the 1970s and followed by a few more recent Nobel prizes in economics that bring the point home. (Yep, that’s economics, the science of how we count and consider costs and benefits, likewise concluding that human beings can’t and don’t do so accurately.)

Our brains trick us.

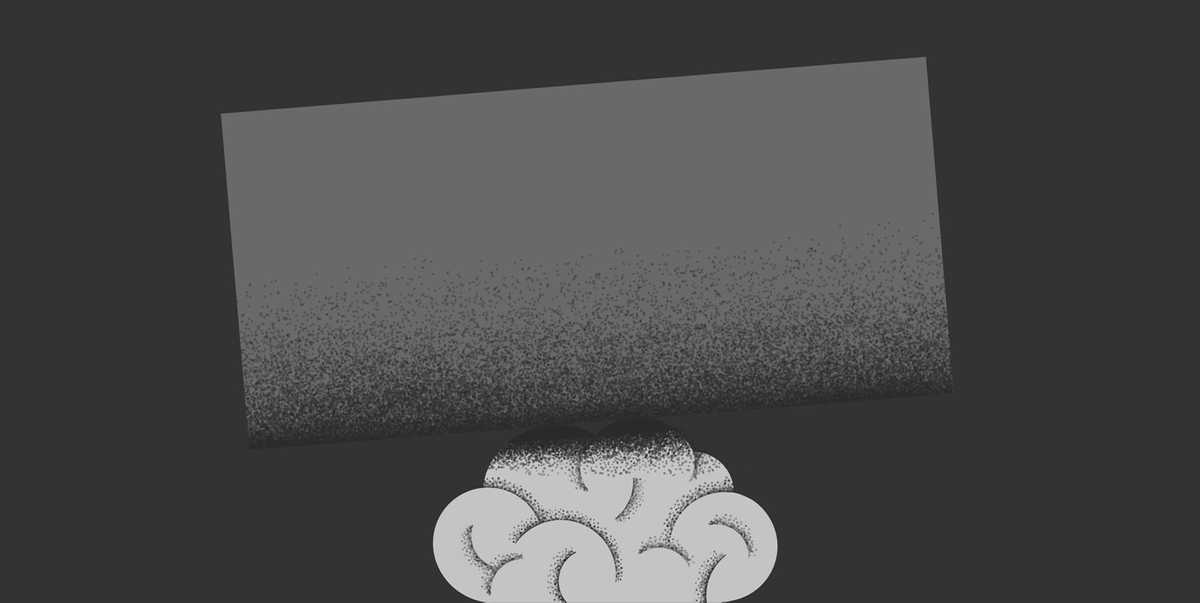

So, tell me which line is shorter? Quick, in 5 seconds or less. No rulers allowed.

If you’re like most people, you’ll see line B as shorter. You might have also guessed that this is an optical illusion and both lines are actually the same length. (I can’t fool you so easily!) But glance back, and your brain will still immediately perceive one line to be shorter than the other. Even when you’ve taken out the ruler and measured it. It’s hard to override that initial response.

While this is just two simple lines, it’s an effective demonstration of how difficult it is for our hard-working, rational brain to entirely overrule our fast-working, lazy brain, which insists on seeing the line the way it does.

That is to say, what we know rationally can still seem at odds with what we see and with what feels right.

And that’s just how we look at two lines.

Behavioural sciences and Wikipedia have now labelled hundreds of cognitive biases: the faulty ways in which our fast-working brain filters and comprehends the myriad of information around us.

It can be smarting to think that we can’t rely on our smarts. But it’s not all bad. It’s evolution’s way of helping us think quickly so we cope when faced with the hecticness of life. But it means we sometimes make mistakes.

Cognitive biases exist to help us navigate a world saturated with things to know, to understand, to act on, and to remember.

We face four higher-order problems when trying to take in information rationally, and each triggers our biases. Firstly, there is too much of it. Secondly, it’s not clear. Thirdly, we need to decide or act fast. Fourthly, we can’t remember everything. When faced with these things, our biases step in, in an effort to help out.

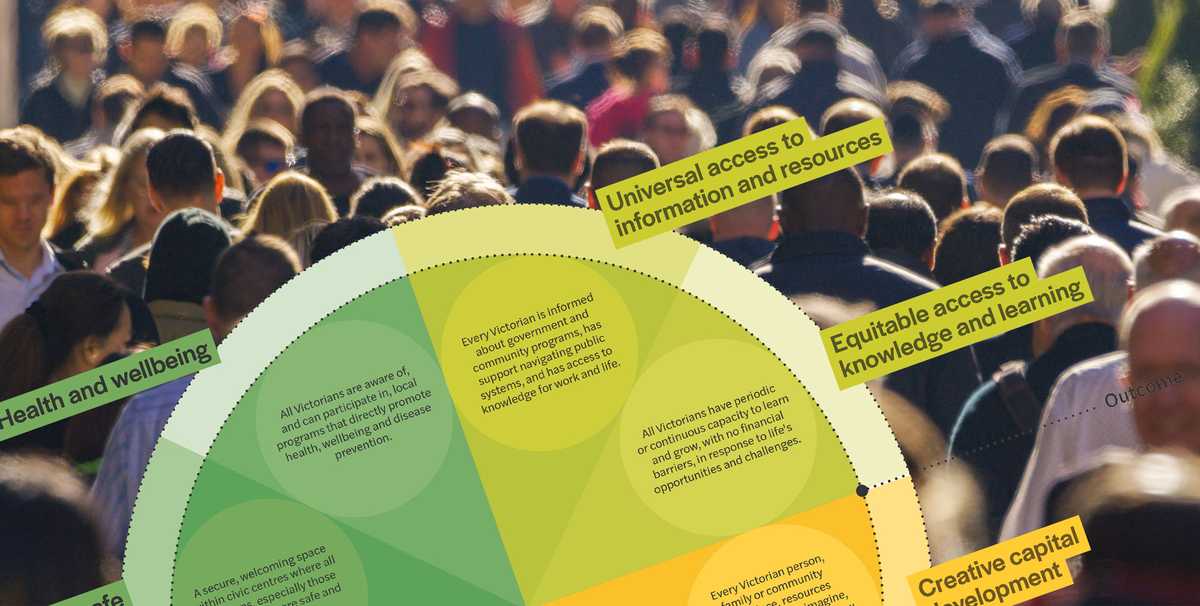

Diagram: Why we respond to new stuff with cognitive biases (sourced from Buster Benson, whose tidy synthesis of why thinking is hard has incidentally been viewed thousands of times, in fact proving the point).

Multiple cognitive biases are at play when choosing to vaccinate or not to vaccinate.

Whether or not we intend to get jabbed seems to trigger biases in every macro-category, but it’s the first two that steal the spotlight: resisting too much information, and then putting meaning where there isn’t any. It means facts and statistics, on their own, may not be enough to change the minds of the unsure, and certainly not enough to move the unwilling.

1. Too much info.

There is no shortage of words being expended on vaccination (or COVID-19 for that matter). So, to manage, we use shortcuts that automatically filter what we take in (and what we discard). Things that are repeated often (availability bias, exposure effect), things that are unusual (bizarreness effect, negativity bias, salience bias), and things that are individual and vivid, as opposed to dry statistical evidence (base-rate fallacy), are more likely to make it through the sieve.

This set of biases is likely an important contributing factor to fears of the very rare (one in a million) but potentially severe blood clotting side effects associated with AstraZeneca. While the likelihood of any one of us suffering this side effect is much lower than the risk from other things we do every day, we have heard (many) reports about the few individuals (globally) for whom this has resulted in illness or death.

These stories can be more convincing than facts and figures (base-rate fallacy). Bizarre by everyday standards, these infrequent deaths are over-represented in what we hear in the news, leading our brains to overestimate the likelihood that this could happen to us (availability bias).

In combination, they make the risk seem more real, and larger, than it might be.

2. Not enough meaning.

A related set of biases that may see us more afraid of AstraZeneca (than of COVID-19) are those which prompt us to fill in knowledge gaps with our own conclusions. These biases see us misjudge probabilities (neglect of probability, insensitivity to sample size) and be drawn to stories rather than numbers (anecdotal fallacy), which can lead us to faulty and over-generalised conclusions about what feels riskier.

In this case, we’ll take what we hear (say, about a person of a similar age to us being afflicted with terrible side effects from a vaccine) and treat it as part of a broader pattern, even when we don’t have the data to back it up.

We are even more likely to do this to avoid a course of action where the outcome could be catastrophic (seems fair), but we do this regardless of the probability of that outcome. Fearing a plane trip more than a car trip, regardless of which is more likely to end in an accident, can be an example of this.

Likewise, even though a real risk of contracting COVID-19 may be all around us, we may not feel as likely to die from it as from the sensational but rare vaccine side effects.

These biases, combined with our need to act fast while fearing irreversible decisions (e.g. status quo bias) and with the emotive and creative ways we determine what we remember (e.g. confirmation bias, suggestibility, primacy and recency effect), mean it makes sense that large numbers of people, relying on cognitive shortcuts, can find themselves reaching unhelpful conclusions.

Nudging, not information, may provoke a better response.

So far in Australia, it feels like we’ve been bombarded with more and more information, much of it unhelpful and not terribly clear (e.g. Scott Morrison choosing Pfizer while, at that stage of the rollout, his age placed him in the AstraZeneca queue).

Some advertisers have taken a similar approach, albeit injecting some humour, with a focus on getting young people clamouring for whichever vaccine they can get their hands on (or into their arms).

But given all the biases we’ve just explored, I wonder if, right now, we could actually deal with any more information, any more choices, or anything more at all which feels hard or counterintuitive, and which forces our hard-working brain to do more heavy lifting?

Data may hold the answers. But our brains aren’t necessarily wired to use it.

Our emotions and our resilience are maxed out. Our lazy brain might be all we have left. Nudging it, by making the change feel easy and by creating simple incentives and small environmental changes, might be a better response. It can change behaviour (even where it doesn’t change minds).

Emotional carrots, like identity, replace hard rational judgments. Clear, easy-to-use systems, staffed by people we trust, replace convoluted bureaucracies. Hope replaces fear. And getting a vaccine, of any variety, starts to smell like roses.